Large Language Models (LLMs) have become the backbone of modern artificial intelligence, powering everything from chatbots to search engines and enterprise solutions. But with great power comes great vulnerability. Cybercriminals have found ways to poison these models, manipulating outputs and injecting malicious intent into AI-driven interactions. LLM poisoning isn’t just a theoretical risk—it’s an emerging threat with real-world consequences that could undermine trust in AI and disrupt industries.

Understanding LLM Poisoning

At its core, LLM poisoning refers to the act of corrupting a language model’s training data or influencing its learning process to produce biased, misleading, or outright harmful outputs. This manipulation can occur at various stages, including during pre-training, fine-tuning, or real-time interactions where models continuously learn from user input.

Cybercriminals exploit vulnerabilities in LLMs by introducing malicious data, which can subtly or overtly skew results. This isn’t merely an academic concern—there have already been documented cases where attackers have manipulated AI models to spread misinformation, bypass security filters, and even generate offensive content.

How LLM Poisoning Happens

- Data Injection Attacks – Attackers introduce harmful data into publicly available datasets, tricking LLMs into learning incorrect or dangerous patterns.

- Backdoor Attacks – Malicious actors embed hidden triggers in the training data, causing the LLM to respond in a specific, harmful manner when prompted with certain inputs.

- Model Distortion – Attackers target reinforcement learning mechanisms, systematically biasing AI outputs toward their desired narrative.

- Adversarial Prompt Engineering – By carefully crafting queries, cybercriminals can force LLMs to generate misleading or harmful responses.

- Fine-Tuning Exploits – Attackers manipulate fine-tuning processes to embed biased or unethical behaviors into otherwise neutral models.

Each of these methods poses a significant risk, particularly in industries that rely heavily on AI-generated insights, such as finance, healthcare, and cybersecurity itself.

Real-World Impacts of LLM Poisoning

Industry-Wide Consequences

| Industry | Potential Risks from LLM Poisoning |

|---|---|

| Healthcare | Misleading medical advice, fake clinical research, patient misinformation |

| Finance | Market manipulation, fraudulent investment guidance, phishing scams |

| Cybersecurity | Bypassed security protocols, automated phishing attacks, data breaches |

| Journalism | Spread of false information, deepfake-enhanced disinformation campaigns |

| Legal | Fabricated legal precedents, misleading legal interpretations |

Cybercriminals can leverage poisoned AI models to sway elections, create highly convincing deepfake propaganda, and even manipulate automated trading systems to trigger financial disruptions. The stakes are high, and the need for robust defenses has never been greater.

The Role of Data Poisoning in Cybercrime

One of the most alarming aspects of LLM poisoning is its potential to supercharge existing cyber threats. Traditional phishing attacks, for instance, rely on social engineering and human error. However, with access to a manipulated LLM, attackers can automate highly personalized phishing campaigns, creating messages that mimic real users with near-perfect accuracy.

Consider the implications of an AI model trained on poisoned data in the context of business email compromise (BEC). Instead of manually crafting fraudulent emails, attackers could use an LLM to generate contextually aware, industry-specific emails that pass traditional security filters.

Case Study: The Rise of AI-Generated Phishing

A cybersecurity firm recently uncovered a campaign where an LLM-powered chatbot was being used to automate spear-phishing attacks. Attackers fed the model manipulated data, enabling it to generate emails that mimicked a company’s internal communication style. Employees were tricked into divulging credentials, believing they were interacting with a legitimate system.

Defending Against LLM Poisoning

Given the scale and complexity of LLM poisoning, organizations must adopt a multi-layered defense strategy. Some of the most effective countermeasures include:

1. Robust Data Curation

Ensuring that training data comes from verified, high-quality sources can minimize the risk of unintentional poisoning. Regular audits of datasets and filtering out suspicious inputs are essential steps in safeguarding AI integrity.

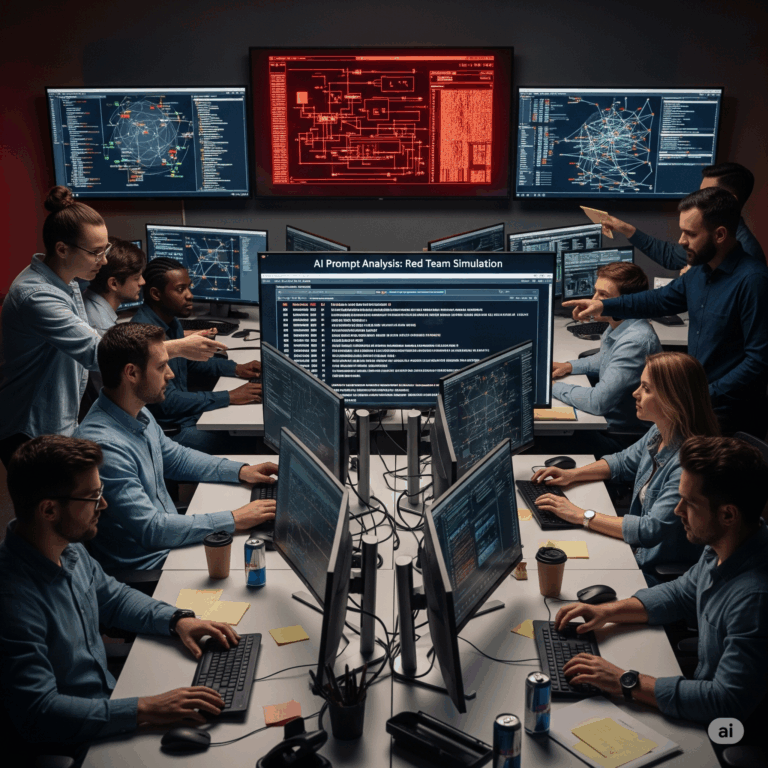

2. AI Model Validation & Testing

Security teams should regularly test AI models for anomalies, employing adversarial testing techniques to identify potential backdoors or biases introduced by malicious actors.

| Validation Method | Purpose |

| Adversarial Testing | Simulating attacks to uncover vulnerabilities |

| Bias Detection Tools | Identifying and mitigating biased outputs |

| Red Team AI Audits | Ethical hacking teams stress-testing models |

| Continuous Monitoring | Detecting real-time deviations in model behavior |

3. Access Control & Input Monitoring

Implementing strict controls over who can fine-tune and interact with AI models can reduce exposure to malicious actors. Additionally, monitoring real-time input data for signs of manipulation can help catch poisoning attempts early.

4. Cryptographic Integrity Checks

Applying cryptographic methods such as hash verification can ensure that training data remains untampered. Organizations can use blockchain-based solutions to maintain immutable records of dataset integrity.

5. AI Explainability & Transparency

Enhancing AI explainability through transparent methodologies allows researchers to track how decisions are made. If an LLM suddenly starts generating biased or harmful content, having a traceable decision-making process can help pinpoint the root cause.

The Future of LLM Security

As AI adoption grows, so too will the threats targeting it. Researchers are actively developing AI-specific security frameworks, and regulators are beginning to explore policies to govern AI safety. However, the responsibility also falls on organizations leveraging AI to ensure their models are resilient to manipulation.

One promising development is the rise of self-healing AI, where models can identify and correct poisoned inputs autonomously. Additionally, collaborations between industry leaders, academia, and cybersecurity firms are fostering better threat intelligence sharing, enabling proactive defenses against AI poisoning.

The fight against LLM poisoning is far from over, but with the right strategies and safeguards in place, organizations can mitigate risks and continue leveraging AI safely. By staying vigilant and proactive, we can ensure that LLMs remain a force for innovation rather than a tool for exploitation.

References Cited:

- Zhang, Q. et. al. “Human-Imperceptible Retrieval Poisoning Attacks in LLM-Powered Applications.” 2024

- OWASP. “Data and Model Poisoning.” GenAI Security Project, 2025.

- Nightfall.AI. “Data Poisoning.” AI Security 101.

- Futurism Technologies. Beyond Intelligence: The Rise of Self-Healing AI. Jan 2024