Artificial intelligence is revolutionizing cybersecurity, altering how businesses, governments, and individuals manage risk and ensure compliance in an increasingly digital world. As cyber threats grow more sophisticated, traditional approaches to risk management and compliance struggle to keep pace. AI offers solutions that are not only faster and more efficient but also adaptive and predictive, providing a powerful tool in the fight against cybercrime. However, its promise comes with significant challenges, including ethical considerations, regulatory gaps, and the risk of over-reliance on automated systems.

The Growing Cyber Threat Landscape

Cybersecurity has never been more critical. Ransomware attacks, data breaches, and state-sponsored cyber warfare have escalated in both scale and impact. In the past, organizations relied on rule-based security systems and human analysts to detect and mitigate threats. While effective to some degree, these methods are increasingly inadequate against the complexity of modern cyber threats. AI is changing this dynamic by introducing machine learning models that analyze massive datasets, detect anomalies, and respond to threats in real time.

Unlike traditional security tools, AI-driven systems can identify patterns that may go unnoticed by human analysts. For example, AI can detect subtle signs of credential stuffing attacks or insider threats by recognizing deviations from a user’s or system’s normal behavior. This proactive approach enhances an organization’s ability to anticipate and mitigate threats before they escalate into full-blown security incidents.
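
As a minimal sketch of what recognizing behavioral deviations can look like for credential stuffing, the Python below counts, per source IP, how many distinct usernames failed to log in, then flags sources whose count is extreme relative to the others using a median-based modified z-score. The event format, the function names, the MAD floor of 1, and the 3.5 cutoff (a common rule of thumb for modified z-scores) are illustrative assumptions; production systems learn from many more signals.

```python
from collections import defaultdict
from statistics import median

# Hypothetical event format: (source_ip, username, succeeded).
def distinct_failed_users(events):
    """Count distinct usernames with at least one failed login, per source IP."""
    users = defaultdict(set)
    for ip, username, succeeded in events:
        if not succeeded:
            users[ip].add(username)
    return {ip: len(names) for ip, names in users.items()}

def flag_outliers(counts, cutoff=3.5):
    """Return sources whose modified z-score (median/MAD based) exceeds the cutoff."""
    if not counts:
        return []
    values = list(counts.values())
    med = median(values)
    # Counts are integers, so floor the MAD at 1 to avoid dividing by zero
    # when most sources look identical (an assumption of this sketch).
    mad = max(median(abs(v - med) for v in values), 1)
    return sorted(ip for ip, v in counts.items() if 0.6745 * (v - med) / mad > cutoff)

events = [
    ("10.0.0.1", "alice", False), ("10.0.0.1", "alice", True),
    ("10.0.0.2", "bob", False),
    ("10.0.0.3", "carol", False), ("10.0.0.3", "carol2", False),
    ("10.0.0.4", "dave", False),
] + [("203.0.113.9", f"user{i}", False) for i in range(40)]  # one source sprays 40 accounts

counts = distinct_failed_users(events)
print(flag_outliers(counts))  # ['203.0.113.9']
```

A median-based baseline is used so that the attacking source does not inflate the statistics it is judged against, as it would with a plain mean and standard deviation.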

AI in Risk Management: Predictive and Adaptive Security

Risk management is inherently about preparation, assessment, and response. AI-driven tools transform this process by introducing predictive capabilities that help identify and prioritize vulnerabilities before attackers exploit them. Machine learning algorithms analyze historical attack data, identifying trends that indicate future risks. This predictive intelligence allows businesses to shore up their defenses before a breach occurs.
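
The trend-finding step described above can be illustrated, in deliberately simplified form, by fitting an ordinary least-squares line to a series of monthly incident counts and extrapolating one month ahead. This is a sketch, not how any particular product works: the incident series is invented, the function names are hypothetical, and real predictive models draw on far richer inputs such as asset exposure, vulnerability data, and threat intelligence.

```python
def linear_trend(counts):
    """Least-squares slope and intercept for counts observed at t = 0, 1, 2, ..."""
    n = len(counts)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_t = (n - 1) / 2
    mean_y = sum(counts) / n
    s_ty = sum((t - mean_t) * (y - mean_y) for t, y in enumerate(counts))
    s_tt = sum((t - mean_t) ** 2 for t in range(n))
    slope = s_ty / s_tt
    return slope, mean_y - slope * mean_t

def forecast_next(counts):
    """Project the fitted line one period past the last observation."""
    slope, intercept = linear_trend(counts)
    return intercept + slope * len(counts)

monthly_phishing_incidents = [12, 15, 17, 20, 24, 26]  # hypothetical data
print(round(forecast_next(monthly_phishing_incidents), 2))  # 29.0
```

A straight line is the crudest possible model; it only shows the idea of projecting forward from historical data so that defenses can be adjusted ahead of the expected increase.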

Additionally, AI strengthens adaptive security frameworks that evolve in response to new threats. Traditional security policies require constant manual updates to remain effective. AI, however, can automatically refine security protocols by learning from past incidents. This dynamic adaptation helps keep security strategies relevant even as cyber threats evolve.
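
One simple way a control can adjust itself without manual rule edits is an adaptive threshold: track an exponentially weighted moving average (EWMA) of a metric and its variance, alert when a new value exceeds the mean by k standard deviations, and otherwise fold the value into the baseline. This is a sketch under stated assumptions: the class name, the monitored metric, and the parameters (alpha=0.1, k=3, a five-sample warm-up, a standard-deviation floor of 1) are hypothetical, and it adapts to observed traffic rather than to labeled incidents.

```python
import math

class AdaptiveThreshold:
    """EWMA baseline of a metric; flags values more than k standard deviations above it."""

    def __init__(self, alpha=0.1, k=3.0, warmup=5, min_std=1.0):
        self.alpha = alpha      # smoothing factor: larger values adapt faster
        self.k = k              # sensitivity, in standard deviations
        self.warmup = warmup    # observations absorbed before any alerting
        self.min_std = min_std  # floor so a perfectly flat baseline is not hair-trigger
        self.mean = None
        self.var = 0.0
        self.seen = 0

    def observe(self, value):
        """Return True if value is anomalous; otherwise fold it into the baseline."""
        if self.mean is None:
            self.mean, self.seen = float(value), 1
            return False
        std = max(math.sqrt(self.var), self.min_std)
        if self.seen >= self.warmup and value > self.mean + self.k * std:
            return True  # anomalies are not learned, so a spike cannot shift the baseline
        # Incremental EWMA update of mean and variance.
        diff = value - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)
        self.seen += 1
        return False

detector = AdaptiveThreshold()
hourly_outbound_mb = [100, 104, 98, 102, 101, 99, 103, 500, 102]  # hypothetical metric
alerts = [v for v in hourly_outbound_mb if detector.observe(v)]
print(alerts)  # [500]
```

Skipping the update on flagged values keeps a sudden spike out of the baseline, while gradual, legitimate drift still moves the threshold over time.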

For instance, financial institutions use AI-driven fraud detection systems that continuously refine their algorithms based on new fraud patterns. By leveraging AI, banks can flag suspicious transactions in real time, reducing financial losses and protecting customer data.
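
To illustrate what continuously refining a model can mean, here is a tiny online logistic regression trained with stochastic gradient descent: each newly confirmed outcome nudges the weights, and incoming transactions are scored immediately. The class name, the three features (scaled amount, new-device flag, foreign-merchant flag), the learning rate, and the 0.5 alert threshold are hypothetical; the source does not describe the algorithms banks actually use, and real systems are far more elaborate.

```python
import math

class OnlineFraudScorer:
    """Logistic regression updated one labeled transaction at a time (SGD on log loss)."""

    def __init__(self, n_features, learning_rate=0.5):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = learning_rate

    def score(self, x):
        """Estimated probability that the transaction with features x is fraudulent."""
        z = self.b + sum(wi * xi for wi, xi in zip(self.w, x))
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, x, is_fraud):
        """One gradient step on the log loss for a newly confirmed label."""
        error = self.score(x) - (1.0 if is_fraud else 0.0)
        self.w = [wi - self.lr * error * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * error

# Hypothetical encoding: [amount / 1000, new_device, foreign_merchant].
history = [
    ([0.05, 0, 0], False), ([0.12, 0, 0], False), ([0.08, 0, 1], False),
    ([2.50, 1, 1], True), ([0.03, 0, 0], False), ([1.90, 1, 0], True),
]
scorer = OnlineFraudScorer(n_features=3)
for _ in range(20):  # replay the small history a few times for this toy example
    for features, label in history:
        scorer.update(features, label)

incoming = [0.07, 0, 0]
suspicious = [2.20, 1, 1]
print(scorer.score(incoming) < 0.5 < scorer.score(suspicious))  # True
```

Because each `update` call is a single cheap gradient step, the model can absorb newly confirmed fraud cases as they arrive instead of waiting for a full retraining cycle.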

AI’s Role in Compliance: Automating and Strengthening Regulatory Adherence

Compliance with cybersecurity regulations is a growing challenge for organizations, especially as regulatory frameworks evolve to address new threats. AI is streamlining compliance processes by automating tasks that were traditionally labor-intensive and error-prone.

For example, organizations subject to the EU General Data Protection Regulation (GDPR), the Health Insurance Portability and Accountability Act (HIPAA), or the California Consumer Privacy Act (CCPA) must ensure the secure handling of sensitive data. AI-powered compliance tools can automatically scan networks for non-compliant data storage practices, flagging potential violations before they become legal liabilities. These systems also generate real-time compliance reports, reducing the burden on compliance teams.
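
A minimal sketch of the scanning idea: walk the records in a data store and flag fields that look like U.S. Social Security numbers or email addresses when that store is not approved for personal data. The regular expressions, the record layout, the store names, and the notion of an approved store are simplifying assumptions; real data-discovery tools combine pattern matching with validation, context, and ML classifiers, and a pattern match alone does not establish a GDPR, HIPAA, or CCPA violation.

```python
import re

# Illustrative patterns only; real detectors validate matches and use context.
PII_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}

def scan_records(store_name, records, approved_stores):
    """Yield (store, record_id, field, pii_type) for PII found outside approved stores."""
    if store_name in approved_stores:
        return
    for record_id, record in records.items():
        for field, value in record.items():
            if not isinstance(value, str):
                continue
            for pii_type, pattern in PII_PATTERNS.items():
                if pattern.search(value):
                    yield (store_name, record_id, field, pii_type)

approved = {"hr_encrypted_db"}  # hypothetical store approved for personal data
analytics_logs = {
    "evt-1": {"note": "checkout ok", "contact": "jane.doe@example.com"},
    "evt-2": {"note": "customer SSN 123-45-6789 pasted into ticket"},
}
for finding in scan_records("analytics_logs", analytics_logs, approved):
    print(finding)
```

Each finding names the store, record, and field, which is the kind of detail a compliance report needs to route a potential violation to the right owner.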

Moreover, natural language processing (NLP) tools help organizations stay up to date with regulatory changes. AI can analyze legal texts, extract relevant clauses, and highlight actionable compliance requirements. This is particularly beneficial for multinational corporations that must navigate complex and overlapping regulatory landscapes.
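
Production regulatory-intelligence tools rely on trained language models, but the step of extracting clauses and highlighting actionable requirements can be sketched with a keyword heuristic: split a text into sentences and keep those containing obligation terms such as "shall" or "must". The naive sentence splitter, the keyword list, and the sample text are assumptions; the sample is invented for illustration and is not quoted from any regulation.

```python
import re

# Terms that often mark obligations in legal drafting (a heuristic, not a rule).
OBLIGATION_TERMS = ("shall", "must", "is required to", "are required to")

def split_sentences(text):
    """Naive splitter on '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def extract_obligations(text):
    """Return (sentence, matched_terms) for sentences containing an obligation term."""
    found = []
    for sentence in split_sentences(text):
        lowered = sentence.lower()
        terms = [t for t in OBLIGATION_TERMS if re.search(rf"\b{re.escape(t)}\b", lowered)]
        if terms:
            found.append((sentence, terms))
    return found

sample = (
    "This section describes the scope of the policy. "
    "The controller shall notify the authority of a breach without undue delay. "
    "Processors must keep records of processing activities. "
    "Guidance may be updated periodically."
)
for sentence, terms in extract_obligations(sample):
    print(terms, "->", sentence)
```

Keyword matching misses obligations phrased indirectly and can flag sentences that merely discuss a requirement, which is why trained NLP models are used in practice.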

Ethical Considerations and Risks of AI in Cybersecurity

Despite its advantages, AI in cybersecurity raises ethical and operational concerns. One of the most pressing issues is the potential for AI systems to produce false positives or false negatives. Overly aggressive AI-driven security measures can mistakenly flag legitimate activities as threats, disrupting business operations. Conversely, an undetected threat due to AI misclassification can lead to catastrophic breaches.
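
The trade-off between false positives and false negatives is usually quantified from a confusion matrix. The sketch below computes precision (the share of alerts that were real threats), recall (the share of real threats that were caught), and false-positive rate from alert counts; the function name and the counts are invented for illustration.

```python
def alert_metrics(tp, fp, fn, tn):
    """Precision, recall, and false-positive rate from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    fpr = fp / (fp + tn) if fp + tn else 0.0
    return {"precision": precision, "recall": recall, "false_positive_rate": fpr}

# Hypothetical month: 40 real threats among 100,000 events; the model raised 200 alerts.
metrics = alert_metrics(tp=36, fp=164, fn=4, tn=99_796)
print({k: round(v, 4) for k, v in metrics.items()})
# {'precision': 0.18, 'recall': 0.9, 'false_positive_rate': 0.0016}
```

Even with a false-positive rate well under 1%, more than four in five alerts here are false alarms, because real threats are rare; this base-rate effect is one reason overly aggressive detection can disrupt operations, while the four missed threats show the opposite risk.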

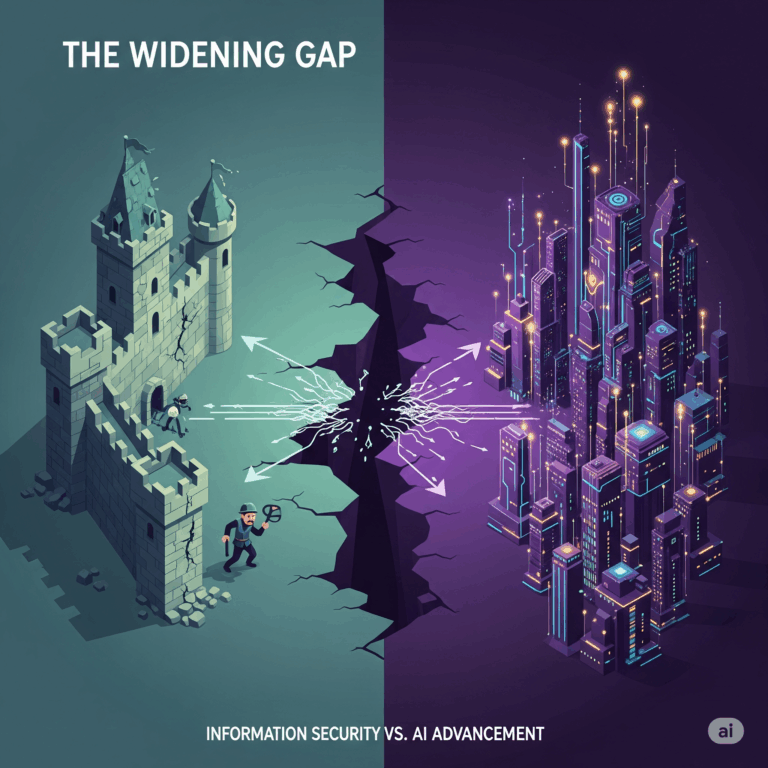

Another major concern is the risk of AI weaponization. Cybercriminals are already leveraging AI to enhance their attack strategies, creating more sophisticated phishing scams, deepfake-based social engineering, and automated malware that can evade detection. As defensive AI improves, so do offensive AI capabilities, resulting in an ongoing technological arms race.

There is also the question of accountability. When AI-driven security systems fail, determining responsibility becomes a challenge. Who is to blame when an AI model fails to detect a breach: the security team, the software vendor, or the AI itself? Addressing these ethical and legal questions is crucial as AI adoption in cybersecurity grows.

The Future of AI in Cyber Risk Management and Compliance

Looking ahead, AI’s role in cybersecurity will only expand. The integration of AI with blockchain technology, for example, could create more transparent and immutable security frameworks. Decentralized AI models could reduce reliance on single points of failure, enhancing resilience against cyber threats.

Additionally, advancements in explainable AI (XAI) will improve transparency in AI-driven decision-making. Organizations and regulators will demand greater insight into how AI models reach their conclusions, ensuring accountability and reducing bias in automated security measures.
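
Explainability techniques vary widely (SHAP and LIME are well-known examples), but the core idea can be shown with a linear risk score, where each feature's contribution is simply its weight times its value, so an analyst can see which signals drove an alert. The feature names, weights, and bias below are hypothetical.

```python
# Hypothetical weights for a linear alert-scoring model.
WEIGHTS = {
    "failed_logins_last_hour": 0.30,
    "new_country": 1.20,
    "off_hours_access": 0.50,
    "privileged_account": 0.80,
}
BIAS = -2.0

def explain(event):
    """Return the total score and per-feature contributions, largest magnitude first."""
    contributions = {name: w * event.get(name, 0) for name, w in WEIGHTS.items()}
    score = BIAS + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked

score, ranked = explain({"failed_logins_last_hour": 6, "new_country": 1, "off_hours_access": 1})
print(round(score, 2))
for name, contribution in ranked:
    print(f"{name:>25}: {contribution:+.2f}")
```

For nonlinear models such as gradient-boosted trees or neural networks, contributions are not this direct, which is why dedicated attribution methods exist.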

Collaboration between governments, private sector entities, and AI researchers will be critical in shaping policies that balance innovation with security. Regulatory frameworks must evolve alongside AI advancements to mitigate risks without stifling technological progress.

Conclusion

AI is undeniably transforming cyber risk management and compliance, offering unprecedented efficiency, adaptability, and predictive capabilities. However, as with any transformative technology, it brings challenges that require careful consideration. Ethical dilemmas, regulatory hurdles, and adversarial AI threats must be addressed to ensure that AI remains a force for good in cybersecurity. By harnessing AI responsibly, organizations can build more resilient defenses, protect critical infrastructure, and navigate an increasingly complex cyber landscape with confidence.

References Cited:

- Medium. “The Basics of Decentralized AI: How It Works and Its Impact.”

- ISC2. “The Ethical Dilemmas of AI in Cybersecurity.”

- World Journal of Advanced Engineering and Technology and Sciences. “The Role of AI in Information Security Risk Management.”