As artificial intelligence (AI) becomes increasingly embedded in critical workflows—ranging from military operations to financial services—maintaining the ability for humans to resume control is not just a desirable feature but a critical safety requirement. Responsible AI implementation demands that systems are built with deliberate mechanisms that enable human intervention, interruption, and command reassumption at any point during operation.

This article examines the technical, procedural, and governance practices needed to ensure that humans can rapidly and effectively retake control of AI systems when necessary, even under high-pressure conditions.

The Criticality of Resumable Human Control

In complex environments, unexpected conditions, adversarial manipulation, or system drift can cause AI outputs to become dangerous or inappropriate. If human operators cannot quickly regain control, the consequences may include:

- Catastrophic mission failure

- Public safety hazards

- Massive financial or reputational losses

As the U.S. Department of Defense Ethical Principles for AI state, humans must have “the ability to disengage or deactivate deployed systems that demonstrate unintended behavior”¹. Without resumable control mechanisms, organizations risk deploying systems that could spiral into unintended outcomes.

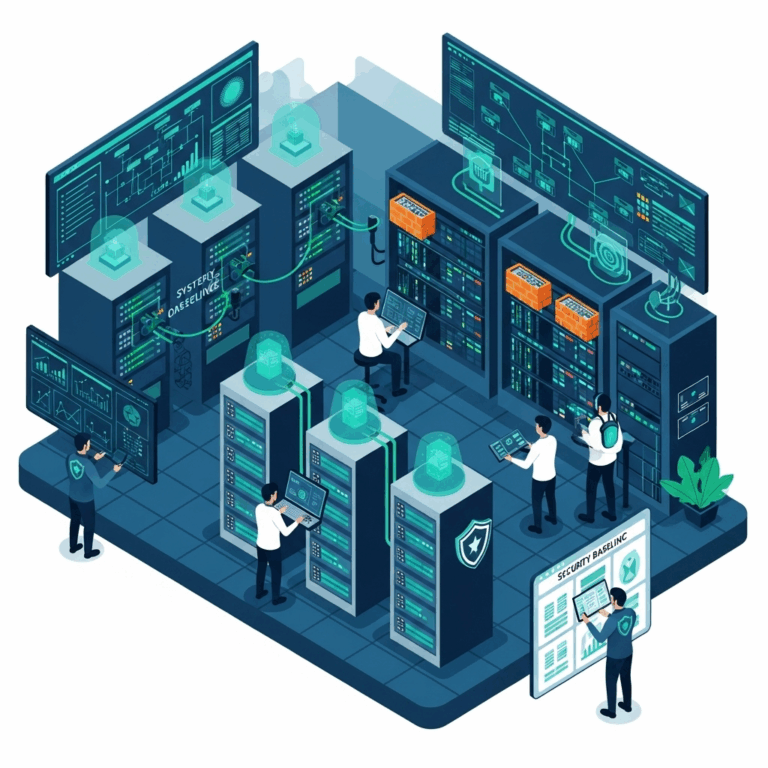

Key Design Strategies for Human Control Resumption

Pre-Designed Intervention Points

AI systems should be architected with predefined points where human intervention is expected and possible. These include:

- Manual override triggers accessible to qualified personnel

- Pause buttons on user interfaces for non-critical halting

- Emergency shutdown mechanisms for critical failure scenarios

Embedding these options early in the design phase avoids complicated retrofitting later.
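The three intervention points above can be sketched as a small state machine that the autonomous loop consults before every action. This is a minimal illustration, not a reference design: the names `Mode`, `InterventionController`, and `run_steps` are hypothetical, and the choice to make shutdown terminal is an assumption.

```python
import enum
import threading


class Mode(enum.Enum):
    RUNNING = "running"
    PAUSED = "paused"      # non-critical halt, resumable
    MANUAL = "manual"      # a human operator has taken over
    SHUTDOWN = "shutdown"  # emergency stop, assumed terminal here


class InterventionController:
    """Holds the current mode; the AI loop checks it before each action."""

    def __init__(self):
        self._mode = Mode.RUNNING
        self._lock = threading.Lock()  # UI and AI loop may run on different threads

    @property
    def mode(self):
        with self._lock:
            return self._mode

    def _set(self, new_mode):
        with self._lock:
            if self._mode is Mode.SHUTDOWN:
                return False  # nothing can leave the shutdown state
            self._mode = new_mode
            return True

    def pause(self):
        return self._set(Mode.PAUSED)

    def resume(self):
        return self._set(Mode.RUNNING)

    def manual_override(self):
        return self._set(Mode.MANUAL)

    def emergency_shutdown(self):
        return self._set(Mode.SHUTDOWN)

    def may_act_autonomously(self):
        return self.mode is Mode.RUNNING


def run_steps(controller, actions):
    """Run autonomous actions in order, stopping at the first intervention."""
    done = []
    for action in actions:
        if not controller.may_act_autonomously():
            break
        done.append(action())
    return done
```

Because the check happens before each action rather than once at startup, a pause or override issued mid-run takes effect at the next step boundary.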

Role-Based Access and Authorization

Not every user should be able to resume control. Proper design includes:

- Tiered access controls: Granting override authority to trained personnel

- Multi-factor authentication before executing critical control resumption

- Audit logs recording every manual intervention attempt

Role-based designs balance fast access for authorized operators against the risk of unauthorized or accidental overrides.
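As a rough sketch of how tiered access, multi-factor checks, and audit logging might fit together, consider the gate below. The role names, tier numbers, and the `mfa_verified` flag are all illustrative assumptions; a real deployment would delegate authentication to an identity provider rather than accept a boolean.

```python
import time
from dataclasses import dataclass, field

# Hypothetical tiers: a higher number means more authority.
ROLE_TIER = {"observer": 0, "operator": 1, "supervisor": 2}
REQUIRED_TIER = {"pause": 1, "manual_override": 2, "emergency_shutdown": 2}
# Assumption: only the critical actions demand a second factor.
MFA_REQUIRED = {"manual_override", "emergency_shutdown"}


@dataclass
class OverrideGate:
    audit_log: list = field(default_factory=list)

    def request(self, user, role, action, mfa_verified=False):
        """Return True if the intervention is authorized; log every attempt."""
        tier = ROLE_TIER.get(role, -1)
        needed = REQUIRED_TIER.get(action)
        if needed is None:
            reason = "unknown action"
        elif tier < needed:
            reason = "insufficient role"
        elif action in MFA_REQUIRED and not mfa_verified:
            reason = "mfa required"
        else:
            reason = "ok"
        allowed = reason == "ok"
        # Denied attempts are recorded too, since they matter for audits.
        self.audit_log.append({
            "ts": time.time(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
            "reason": reason,
        })
        return allowed
```

Leaving the non-critical pause free of the second factor is one possible trade-off: it keeps the fastest, least destructive intervention cheap while reserving stronger checks for irreversible actions.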

Confidence Threshold Triggers

AI systems should dynamically assess their confidence levels. When confidence falls below a set threshold:

- Decision-making automatically escalates to a human operator

- The system shifts to “awaiting human input” mode

- Critical actions are paused until validated manually

This dynamic approach prevents low-confidence AI from continuing operations unchecked².
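The escalation behavior described above might look like the following sketch. It assumes the model exposes a calibrated confidence score in [0, 1]; the `Decision` and `ConfidenceGate` names and the 0.8 threshold are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    confidence: float  # assumed calibrated, in [0, 1]


class ConfidenceGate:
    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self.awaiting_human = False
        self.pending = []

    def submit(self, decision):
        """Return 'executed' or 'escalated' for a proposed decision."""
        # Once the gate is waiting on a human, every decision is held,
        # even a high-confidence one, until the operator clears the queue.
        if self.awaiting_human or decision.confidence < self.threshold:
            self.awaiting_human = True
            self.pending.append(decision)
            return "escalated"
        return "executed"

    def human_review(self, approve):
        """Apply the operator's verdict to pending decisions; return approved ones."""
        approved = [d for d in self.pending if approve(d)]
        self.pending.clear()
        self.awaiting_human = False
        return approved
```

A short usage example:

```python
gate = ConfidenceGate(threshold=0.8)
gate.submit(Decision("reroute", 0.95))   # executed
gate.submit(Decision("shutdown", 0.40))  # escalated, gate now awaits a human
gate.submit(Decision("reroute", 0.99))   # also escalated while awaiting
gate.human_review(lambda d: d.action == "reroute")
```

The threshold only helps if the confidence scores are meaningful, so calibration needs to be validated separately.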

Operational Practices for Human Control

Rigorous Human Training

Operators must be trained not just to monitor AI but to:

- Detect anomalies and system drift

- Recognize when intervention is necessary

- Execute resumption procedures smoothly

Organizations should simulate “loss of control” drills similar to aviation or military readiness exercises³.

Clear Escalation Protocols

Written protocols must define:

- When an operator is obligated to take control

- How to escalate control issues within organizational chains

- Who assumes command during contested or ambiguous scenarios

Clear, actionable escalation guidelines reduce confusion during crises.
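One way to keep a written protocol unambiguous is to mirror it in a machine-readable table. The sketch below is purely illustrative: the role names and acknowledgment windows in `ESCALATION_CHAIN` are invented for the example, and real values would come from the organization's own protocol.

```python
# Each entry: (role, seconds that role has to acknowledge before escalation).
# None marks the final authority, who holds command indefinitely.
ESCALATION_CHAIN = [
    ("on_duty_operator", 30),
    ("shift_supervisor", 60),
    ("incident_commander", None),
]


def current_owner(seconds_unacknowledged, chain=ESCALATION_CHAIN):
    """Return the role responsible after an alert has gone unacknowledged."""
    elapsed = 0
    for role, window in chain:
        if window is None or seconds_unacknowledged < elapsed + window:
            return role
        elapsed += window
    return chain[-1][0]  # fall back to the last role if no terminal entry exists
```

With these hypothetical windows, an alert unacknowledged for 45 seconds belongs to the shift supervisor, which answers the "who assumes command" question without debate in the moment.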

Monitoring Interfaces Optimized for Intervention

User interfaces (UI) for AI monitoring should:

- Highlight anomalies or unusual patterns visually

- Display confidence scores and risk indicators in real-time

- Make control override options prominent and accessible

A well-designed UI reduces the human reaction time required for control resumption.

Testing Human Resumption Capabilities

Realistic Scenario Simulations

Testing control resumption must involve:

- Failure mode exercises replicating real-world failure conditions

- Adversarial attack simulations to practice regaining control under cyber threat

- Time-to-intervention benchmarks to measure operator readiness

Ongoing testing validates whether both the systems and the human teams are prepared for real-world challenges.
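A time-to-intervention benchmark can be as simple as comparing, for each drill, when an anomaly was injected with when the operator took control. The sketch below assumes drill results are recorded as pairs of timestamps in seconds, with `None` marking a drill where the operator never intervened; the function name and the pass criterion are illustrative assumptions.

```python
import statistics


def summarize_drills(drills, target_seconds):
    """Summarize (anomaly_injected_at, operator_took_control_at) pairs."""
    drills = list(drills)
    latencies = [took - injected for injected, took in drills if took is not None]
    missed = len(drills) - len(latencies)
    worst = max(latencies) if latencies else None
    return {
        "median_s": statistics.median(latencies) if latencies else None,
        "worst_s": worst,
        "missed": missed,
        # Assumed criterion: no missed drills and the worst case within target.
        "meets_target": missed == 0 and worst is not None and worst <= target_seconds,
    }
```

Judging readiness on the worst case rather than the median is a deliberately conservative choice, since a single slow response can be the one that matters.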

Post-Intervention Audits

Every manual control resumption should be:

- Logged and documented in a forensic system

- Reviewed by an oversight committee for process improvement

- Analyzed for lessons learned to update procedures

Continuous learning strengthens overall operational resilience.
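For the logging step, one common technique for making records tamper-evident is to chain them by hash, so that altering an earlier entry invalidates everything after it. The article does not prescribe this approach; the sketch below is one possible way to do it, and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(prev_hash, record):
    body = json.dumps(record, sort_keys=True)  # stable serialization
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append an intervention record linked to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A hash chain detects modification but does not prevent it, so the log would still need to be stored where operators under review cannot rewrite it wholesale.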

Technological Enablers of Control Resumption

Several technical strategies can help ensure that humans can resume control:

- Dual-operating modes (autonomous/manual) for critical systems

- Edge computing fallback allowing local human control if cloud systems fail

- Redundant communication links to prevent control loss due to network outages⁴

Leveraging these technologies creates a more robust human-AI partnership.
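To illustrate how redundant links and a local fallback could combine, the sketch below tries each communication link in priority order and drops to local manual control if all of them fail. The `Link` class simulates a connection for the example; the link names and the `"local_manual"` mode label are assumptions.

```python
class Link:
    """Simulated communication link; a real one would wrap a network client."""

    def __init__(self, name, healthy=True):
        self.name = name
        self.healthy = healthy

    def send(self, command):
        if not self.healthy:
            raise ConnectionError(f"{self.name} is down")
        return f"{command} via {self.name}"


def send_with_failover(links, command):
    """Try links in priority order; return the first success or None."""
    for link in links:
        try:
            return link.send(command)
        except ConnectionError:
            continue  # fall through to the next redundant link
    return None


def dispatch(links, command):
    """Return (control_mode, result), falling back to local manual control."""
    result = send_with_failover(links, command)
    if result is None:
        return ("local_manual", None)
    return ("remote", result)
```

For example, with the primary link down and a backup healthy, `dispatch([Link("fiber", healthy=False), Link("satellite")], "halt")` routes the command over the backup, and with both down it reports that control should pass to the local operator.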

Challenges and Considerations

While vital, human resumption of control also brings challenges:

- Latency: Human response times may be too slow for environments that operate on millisecond timescales

- Automation complacency: Operators may over-trust AI and delay intervention

- Complex system understanding: Operators must maintain deep familiarity with increasingly complex AI systems

Training, system design, and cultural emphasis on vigilance can help mitigate these issues⁵.

What’s Next in This Series?

Upcoming articles in the Responsible AI Implementation series:

- Proper Human Training for AI System Engagement

- Proper AI Use in Critical Infrastructure

- A Summary of Responsible AI Implementation and Starting Points

The next article will explore how training programs can better prepare humans to work alongside and manage AI systems.

References Cited:

1 U.S. DoD Ethical Principles for AI

2 NIST Trustworthy and Responsible AI

3 RAND Corporation: Building Resilient AI Systems

4 MIT Technology Review: How to Maintain Human Control Over AI

5 Brookings: Automation Bias and Human Control