Artificial intelligence (AI) systems are increasingly integrated into high-impact sectors such as healthcare, finance, national security, and critical infrastructure. In these sensitive environments, the margin for error is slim, and the cost of failure can be catastrophic. To ensure safety and public trust, one of the core design imperatives of responsible AI is to preserve human control of quality throughout the AI lifecycle.

This article explores the architectural and procedural mechanisms that enable meaningful human oversight, prevent automation bias, and ensure systems remain adjustable, auditable, and aligned with human-centered outcomes.

Why Human Control Matters in AI Systems

Many AI systems are designed to make or recommend decisions autonomously, based on patterns learned from large datasets. But even the most advanced algorithms operate within parameters set by human intent, data quality, and system goals. Without human control mechanisms, the risks include:

- Runaway automation in life-critical systems

- Opaque decision-making that cannot be audited

- Degraded accountability when outcomes go wrong

According to the NIST AI Risk Management Framework¹, ensuring human control of quality is central to mitigating risks tied to unpredictability, ethical failure, and system drift.

Design Principles That Preserve Human Oversight

Explainability by Design

An essential foundation is making models interpretable. That means embedding:

- Model cards: Documentation that explains model purpose, training data, and known limitations

- Local explainability tools (e.g., LIME, SHAP): Explaining individual predictions so that specific AI decisions can be audited

- Dashboards for decision traceability: Showing which inputs contributed to outputs

These tools help human stakeholders understand what the system is doing and why.
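As a minimal sketch of decision traceability, assume a hypothetical linear risk model. The feature names, weights, and baseline values below are invented for illustration. For a linear model, the effect of each input relative to a baseline is simply the weight times the input's deviation from that baseline, which is also the form SHAP values take for linear models with independent features. A production system would more likely rely on a library such as LIME or SHAP.

```python
from typing import Dict, List, Tuple

# Hypothetical linear risk model. The feature names, weights, and baseline
# (typical) input values are illustrative assumptions, not real parameters.
WEIGHTS: Dict[str, float] = {"age": 0.02, "blood_pressure": 0.01, "prior_visits": 0.30}
BASELINE: Dict[str, float] = {"age": 50.0, "blood_pressure": 120.0, "prior_visits": 1.0}
BIAS = 0.1


def score(inputs: Dict[str, float]) -> float:
    """Return the model output for one set of inputs."""
    return BIAS + sum(WEIGHTS[name] * inputs[name] for name in WEIGHTS)


def contributions(inputs: Dict[str, float]) -> Dict[str, float]:
    """Attribute the change from the baseline score to each input feature."""
    return {name: WEIGHTS[name] * (inputs[name] - BASELINE[name]) for name in WEIGHTS}


def ranked_contributions(inputs: Dict[str, float]) -> List[Tuple[str, float]]:
    """Sort features by the size of their effect, largest first."""
    return sorted(contributions(inputs).items(), key=lambda kv: abs(kv[1]), reverse=True)


patient = {"age": 70.0, "blood_pressure": 140.0, "prior_visits": 3.0}
for feature, effect in ranked_contributions(patient):
    print(f"{feature}: {effect:+.2f}")
```

The contributions sum to the difference between this case's score and the baseline score, so a reviewer can see which inputs moved the result and by how much.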

Control Breaks and Human-in-the-Loop Functions

To retain human agency, AI must support:

- Interrupt mechanisms: The ability for a human to pause or stop automated processes

- Escalation paths: When a confidence score drops below a defined threshold, the system should route the decision to a human

- Role-based override capabilities: Allowing senior operators to intervene at key decision points

These design elements prevent AI from acting unchecked in ambiguous or high-risk scenarios.
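The three mechanisms above can be sketched together in a few lines. This is an illustrative outline, not a reference implementation: the class name `OversightController`, the 0.85 threshold, and the `senior_operator` role are all assumptions chosen for the example.

```python
import threading
from dataclasses import dataclass, replace
from enum import Enum
from typing import Iterable, Optional


class Route(Enum):
    AUTOMATED = "automated"
    HUMAN_REVIEW = "human_review"
    HALTED = "halted"


@dataclass(frozen=True)
class Decision:
    label: str
    confidence: float
    overridden_by: Optional[str] = None


class OversightController:
    """Hypothetical controller combining interrupt, escalation, and override."""

    def __init__(self, confidence_threshold: float = 0.85,
                 override_roles: Iterable[str] = ("senior_operator",)) -> None:
        self.confidence_threshold = confidence_threshold
        self.override_roles = frozenset(override_roles)
        self._paused = threading.Event()  # thread-safe stop flag

    def pause(self) -> None:
        """Interrupt mechanism: stop all automated actions."""
        self._paused.set()

    def resume(self) -> None:
        self._paused.clear()

    def route(self, decision: Decision) -> Route:
        """Escalation path: low-confidence decisions go to a human."""
        if self._paused.is_set():
            return Route.HALTED
        if decision.confidence < self.confidence_threshold:
            return Route.HUMAN_REVIEW
        return Route.AUTOMATED

    def override(self, decision: Decision, new_label: str, operator_role: str) -> Decision:
        """Role-based override: only authorized roles may change the outcome."""
        if operator_role not in self.override_roles:
            raise PermissionError(f"role {operator_role!r} may not override decisions")
        return replace(decision, label=new_label, overridden_by=operator_role)


controller = OversightController()
print(controller.route(Decision("approve", 0.60)))  # Route.HUMAN_REVIEW
```

Checking the pause flag before the confidence score is a deliberate choice in this sketch: a human stop request takes precedence over anything the model reports about itself.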

Scenario Testing and Quality Gates

Pre-deployment testing should simulate real-world conditions to identify potential failure modes. Key strategies include:

- Red team assessments for adversarial scenarios

- Ethical AI audits using diverse stakeholder input

- Quality gates that block models from deployment until they pass human-reviewed benchmarks

This approach ensures AI systems meet both technical and ethical quality standards before going live.
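A quality gate of this kind might look like the sketch below. The benchmark names and minimum values are hypothetical; the point is that deployment is blocked unless every metric clears its minimum and a human reviewer has signed off.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Benchmark:
    name: str
    minimum: float  # lowest acceptable value for this metric


def evaluate_gate(metrics: Dict[str, float], benchmarks: List[Benchmark],
                  human_approved: bool) -> Tuple[bool, List[str]]:
    """Return (passed, reasons). The gate passes only with no failures."""
    failures: List[str] = []
    for benchmark in benchmarks:
        value = metrics.get(benchmark.name)
        if value is None:
            failures.append(f"{benchmark.name}: metric missing")
        elif value < benchmark.minimum:
            failures.append(
                f"{benchmark.name}: {value:.3f} below minimum {benchmark.minimum:.3f}"
            )
    if not human_approved:
        failures.append("human review sign-off missing")
    return (not failures, failures)


# Illustrative thresholds only; real values come from the review process.
benchmarks = [Benchmark("accuracy", 0.90), Benchmark("fairness_ratio", 0.80)]
passed, reasons = evaluate_gate(
    {"accuracy": 0.93, "fairness_ratio": 0.75}, benchmarks, human_approved=True
)
print(passed, reasons)
```

Returning the list of reasons alongside the pass/fail result gives reviewers a record of why a model was held back.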

Monitoring AI Quality Post-Deployment

Designing for human control doesn’t stop after launch. Continuous oversight is essential, and it rests on the three practices below.

Drift Detection and Alerting

Model performance can degrade over time as input distributions shift away from the data the model was trained on. To preserve quality:

- Model drift detection tools monitor for input/output divergence

- Performance thresholds trigger alerts when accuracy or fairness declines

- Human review panels re-evaluate model efficacy regularly

Ongoing monitoring protects against silent failure and reinforces accountability.
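One common way to quantify input drift is the Population Stability Index (PSI), which compares how a feature is distributed in a reference sample and in recent data. The sketch below is one possible implementation using only the standard library. The bin count, the smoothing floor, and the 0.25 alert threshold are assumptions (0.25 is a widely quoted rule of thumb, not a standard) and should be tuned per system.

```python
import bisect
import math
from typing import List, Sequence


def _bin_fractions(values: Sequence[float], edges: List[float]) -> List[float]:
    """Fraction of values falling in each bin defined by sorted inner edges."""
    counts = [0] * (len(edges) + 1)
    for value in values:
        counts[bisect.bisect_right(edges, value)] += 1
    # A small floor avoids log(0) and division by zero for empty bins.
    return [max(count / len(values), 1e-6) for count in counts]


def population_stability_index(reference: Sequence[float],
                               current: Sequence[float], bins: int = 10) -> float:
    """PSI between a reference sample and a current sample of one feature."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    low, high = min(reference), max(reference)
    width = (high - low) / bins
    edges = [low + width * i for i in range(1, bins)]  # equal-width bins
    ref = _bin_fractions(reference, edges)
    cur = _bin_fractions(current, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def needs_human_review(psi: float, threshold: float = 0.25) -> bool:
    """Assumed alerting rule: escalate when PSI exceeds the threshold."""
    return psi > threshold


reference = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]  # inputs moved to the upper half
print(needs_human_review(population_stability_index(reference, shifted)))
```

A check like this would typically run on a schedule for each monitored feature, with alerts routed to the human review panel rather than triggering automatic retraining.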

Logging and Forensic Traceability

Every decision made by an AI system should be logged with metadata, including:

- Input data used

- Confidence scores

- Time of decision

- Responsible modules or subsystems

This forensic audit trail is critical for post-incident investigation and regulatory review².
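A decision record covering the fields listed above could be built as follows. This is a sketch under stated assumptions: the field names, the `model_version` field, and the input hash are additions for illustration, and the JSON Lines file format is one option among many.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict


def build_decision_record(inputs: Dict[str, Any], output: str, confidence: float,
                          module: str, model_version: str) -> Dict[str, Any]:
    """Assemble one audit record for a single AI decision."""
    canonical = json.dumps(inputs, sort_keys=True)  # stable form for hashing
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # time of decision
        "module": module,                # responsible module or subsystem
        "model_version": model_version,  # assumed extra field for traceability
        "inputs": inputs,                # input data used
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "output": output,
        "confidence": confidence,        # confidence score
    }


def to_json_line(record: Dict[str, Any]) -> str:
    """Serialize a record as one line of JSON."""
    return json.dumps(record, sort_keys=True)


def append_record(record: Dict[str, Any], path: str) -> None:
    """Append the record to a JSON Lines audit file."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(to_json_line(record) + "\n")


record = build_decision_record(
    {"amount": 120.5, "country": "DE"}, "approve", 0.91, "fraud_scoring", "v1"
)
print(to_json_line(record))
```

Hashing the canonical form of the inputs lets an investigator later confirm that a stored record matches the data the system actually saw. In practice the audit file would also need access controls and tamper protection, which this sketch does not address.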

Feedback Loops to Human Operators

AI systems should incorporate feedback channels where humans can:

- Flag bad outcomes or anomalies

- Suggest model adjustments

- Provide context or override decisions

This iterative learning loop strengthens both system quality and operator trust.
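The feedback channels above can be captured in a simple structure. The sketch below is hypothetical: the class names and the idea of tracking an override rate are assumptions, meant only to show how operator feedback could be recorded and summarized for review.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import List


class FeedbackKind(Enum):
    FLAG = "flag"              # bad outcome or anomaly
    SUGGESTION = "suggestion"  # proposed model adjustment
    OVERRIDE = "override"      # operator replaced the AI decision


@dataclass(frozen=True)
class Feedback:
    decision_id: str
    kind: FeedbackKind
    operator: str
    note: str


class FeedbackLog:
    """In-memory collection of operator feedback on AI decisions."""

    def __init__(self) -> None:
        self._entries: List[Feedback] = []

    def submit(self, entry: Feedback) -> None:
        self._entries.append(entry)

    def summary(self) -> Counter:
        """Count feedback entries by kind."""
        return Counter(entry.kind for entry in self._entries)

    def override_rate(self, total_decisions: int) -> float:
        """Share of all decisions that operators overrode."""
        if total_decisions <= 0:
            raise ValueError("total_decisions must be positive")
        return self.summary()[FeedbackKind.OVERRIDE] / total_decisions


log = FeedbackLog()
log.submit(Feedback("d-001", FeedbackKind.OVERRIDE, "op-7", "Missing context on patient history"))
log.submit(Feedback("d-002", FeedbackKind.FLAG, "op-3", "Unexpected score for routine case"))
print(log.summary(), log.override_rate(total_decisions=10))
```

A rising override rate is one signal that the model and its operators are diverging, which may warrant a closer human review.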

Aligning AI Goals with Human Values

Beyond mechanics, responsible AI demands that system objectives match human-defined goals. Two practices support this.

Value Alignment During Design

System designers must involve stakeholders to define success criteria. This includes:

- Public policy alignment in government systems

- Ethical constraints for healthcare and justice use cases

- Societal impact assessments before deployment

Ensuring the AI’s utility function is aligned with the broader public good is a non-negotiable element of human control³.

AI Ethics Review Boards

Cross-functional review boards can evaluate AI deployments for:

- Unintended harm

- Demographic bias

- Regulatory misalignment

These boards provide an institutional safeguard against ethical drift.

Tools That Enhance Human Control of Quality

Several commercial and open-source tools now exist to support human oversight, including:

- IBM Watson OpenScale – Offers fairness and explainability dashboards

- Google’s What-If Tool – Visual interface for model testing and behavior analysis

- Microsoft Responsible AI Dashboard – Combines interpretability, counterfactuals, and performance tracking

Integrating such tools into the development and monitoring workflows enhances auditability and human control⁴.

Risks of Neglecting Human Oversight

Without human control mechanisms, organizations risk:

- Regulatory penalties under regulations such as the EU AI Act

- Loss of public trust due to unexplainable outcomes

- Systemic failures caused by model drift or incorrect assumptions

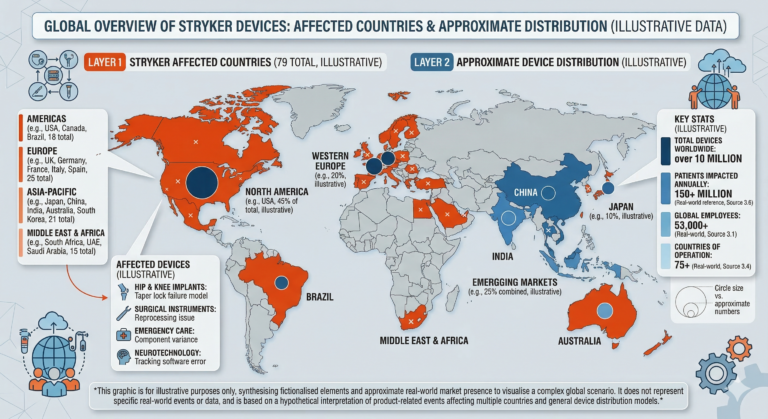

Recent real-world failures, such as biased AI in judicial sentencing or healthcare triage, underscore the urgency of designing for human control⁵.

What’s Next in This Series?

Next in the Responsible AI Implementation series:

- Ensuring Humans Can Resume Control of Key AI Functions

- Proper Human Training for AI System Engagement

- Proper AI Use in Critical Infrastructure

- A Summary of Responsible AI Implementation and Starting Points

We’ll dive into what it takes to restore human control once an AI system is running, even under emergency or failure conditions.

References Cited:

1 NIST AI Risk Management Framework

2 Harvard: Explainability in AI Systems

3 Stanford HAI: Aligning AI With Human Values

4 Microsoft Responsible AI Resources

5 Brookings: Lessons from AI Failures