We’re at a pivotal moment with Artificial Intelligence. It’s clear that AI is going to reshape industries, improve lives, and create immense value, but with that power comes significant responsibility. That’s why the concept of AI guardrails isn’t just important; it’s fundamental to how we build and deploy these systems responsibly.

Understanding AI Guardrails

Think of AI guardrails as the core principles and mechanisms that keep an AI system, especially a large language model, aligned with its intended purpose and ethical boundaries. It’s about more than just preventing technical glitches; it’s about ensuring the AI doesn’t produce unintended or harmful outputs. This means establishing clear acceptable use policies for everyone interacting with AI, developing robust incident response plans for when things go wrong, and integrating AI management into existing IT governance frameworks.

We need to treat AI not as a magical black box, but as another powerful piece of technology. Just as we’ve learned to manage software applications and cloud services, we need to bring AI into the fold of our security, compliance, and operational processes. The funding is certainly there for AI, which is fantastic, but the real work now is in deploying it securely and thoughtfully.

Starting with Purpose: The Use Case First Approach

Before you even think about the technology, you have to ask: What problem are we trying to solve with AI? Are we building new AI-powered services for customers, or are we looking to boost internal productivity with tools like Copilot? This might sound obvious, but it’s a step too often overlooked.

It reminds me a bit of the early days of “big data.” Everyone wanted to collect massive amounts of data, but often without a clear idea of what they’d do with it. With AI, it’s the same: don’t just jump on the bandwagon because it’s new. Understand your specific use case. What’s the return on investment? How will you measure success? That clarity is essential before you commit resources.

Managing Risk and Ensuring Continuous Oversight

Deploying AI isn’t a one-time event; it requires ongoing vigilance. We need to plan thoroughly, establishing those guardrails from the outset. This means a proactive approach to risk management:

- Verifying Intent: Is the AI behaving as designed?

- Ensuring Accuracy: Are the results correct and reliable?

- Controlling Access: Is data being accessed and shared only by authorized parties?

- Ongoing Testing: Regularly test AI systems to ensure they’re producing the expected and appropriate outputs.

- Monitoring for Misuse: Actively look for unusual activity or attempts to inject harmful prompts.

This kind of continuous monitoring is crucial. You can’t just set it and forget it.

Diverse Approaches to AI Adoption

Organizations are approaching AI integration in different ways, each with its own set of considerations:

- Leveraging SaaS AI: Solutions that automate tasks like customer support can be incredibly efficient. They free up human employees for more complex, higher-value work. The key is to ensure these third-party services are secure and well-managed.

- Internal AI Tools (like Copilot): These tools can be transformative for productivity. However, they also introduce risks. For example, if employees accidentally create public links to sensitive internal documents, an AI chatbot could make it much easier for unauthorized users to discover that information. This highlights the need for robust data governance, responsible use training, and automated safeguards.

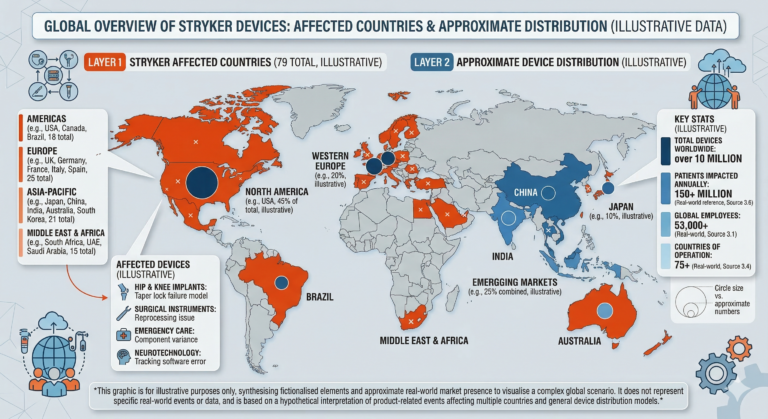

Scaling Risk and Addressing Hallucinations

Not all AI applications carry the same level of risk. Deploying AI for a highly sensitive task, like managing financial data, requires far more stringent controls than using it to brainstorm marketing ideas. It’s about creating a risk matrix and applying security measures proportionally to the potential impact.

A unique challenge with large language models is “hallucination”—when the AI confidently presents false information as fact. This isn’t just about human error; it’s an inherent aspect of how these models work. We need to account for this risk, especially in high-stakes applications, and use tools and processes to mitigate hallucinations.

The Promise of AI Frameworks

It’s encouraging to see the rapid development of AI frameworks from reputable organizations. Groups like NIST, ISO, and OWASP are providing essential guidance on AI risk management, security, and governance. This collaborative effort to establish common standards is vital. It means we’re not starting from scratch; we’re building on shared knowledge and best practices.

These frameworks reinforce the idea that AI shouldn’t be managed in a vacuum. It’s part of a larger ecosystem of technology and business processes, and it needs to be integrated into our existing security and governance structures.

The Broader Societal Impact of AI

The implications of AI extend far beyond the enterprise. We’re seeing how it’s affecting education, raising questions about how students learn and how we assess their knowledge. The shift isn’t to ban AI, but to teach people how to use it responsibly, to validate information, and to apply human judgment.

Perhaps even more profoundly, AI’s ability to persuade is a double-edged sword. While it can be used for good, it also presents a significant risk for social engineering and scams. We need to educate the public, especially those who may not be digitally native, about how AI-driven deception works. A good rule of thumb: if you feel pressured to make an urgent decision or to give up logic, it’s almost certainly a scam.

This is a dynamic and incredibly important area. We’re still learning, but by focusing on responsible development, strong governance, and continuous learning, we can ensure AI serves humanity well.